Eighty Queries and a Four-Second Page: The Access Database Is Back

How AI coding tools eliminated the only design review most software ever got — the slowness of writing it by hand.

I built a small web application over a few days. A data-heavy dashboard, the kind that aggregates metrics from several sources and renders them in a layout that makes sense to a human. I’d think of a requirement, describe it, and the AI would implement it while I moved on to the next thought. Need user activity? Done. Billing summary? Done. Agent metrics? Done. Clean components. Sensible naming. The kind of code you’d glance at in a review and approve without comment.

The page took four seconds to render.

I opened the network panel and found eighty database queries. Nested loops calling the database inside other loops calling the database. Each query was correct. Each returned exactly the data I’d asked for across those few days of building. Each had been generated independently, solving one small problem at a time. None of them knew that seventy-nine other responses were accumulating behind the same page load.

The sequence of asks, spread over days, had produced the same outcome as no design at all. Every requirement I thought of got implemented. The one I didn’t think of, how these eighty individual responses should compose into a single performant page, never entered the conversation. A few well-crafted queries would have replaced all eighty. I made that decision on none of those days. The tool never surfaced the need. The process of building incrementally never forced it.

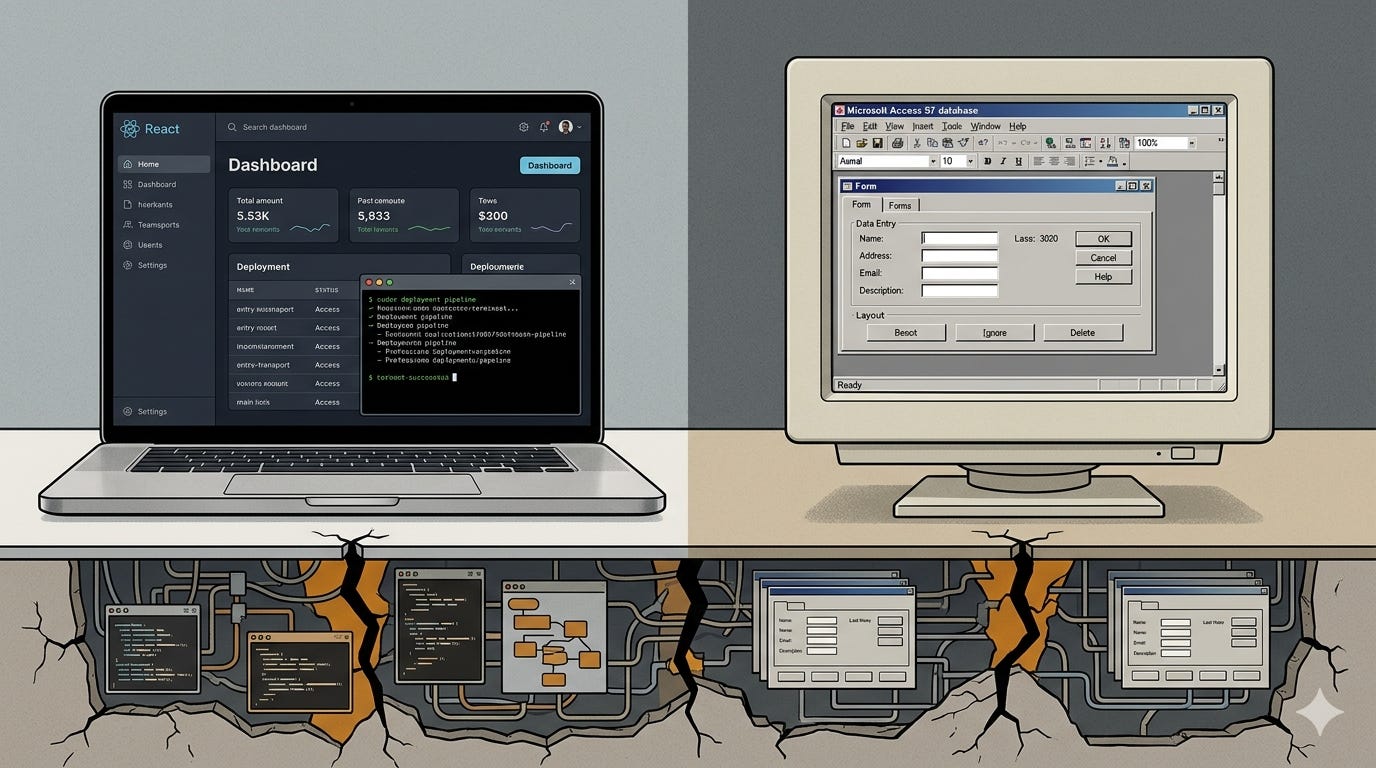

Staring at those eighty queries, I had a sudden flash of recognition. I’d seen this before. Not the specific bug, but the shape of the failure. In the late nineties, Microsoft FrontPage let anyone build a website and Access let anyone build a database. Combined, they produced something that looked like a web application. FrontPage even auto-generated the server-side code to connect them. Code generation in the late nineties. The applications looked professional. They worked on the day they were built. They fell apart the moment they had to scale, integrate, or survive concurrent users. The tools made creation easy and made design invisible. I was looking at the same pattern wearing a React component.

The page worked exactly as requested. It failed at everything I didn’t request. That gap, between what gets asked and what a production system requires, is where an entire generation of software is failing.

There is an engineer in your organization who has spent a career learning what breaks. Connection pooling. Transaction isolation. Graceful degradation. Circuit breakers. Input validation at every trust boundary. They’ve been paged at 3 AM enough times to carry a catalog of failure modes that no training dataset contains.

Your team adopted an AI coding assistant six months ago. Feature velocity doubled. The backlog is shrinking faster than it ever has. Sprint demos are impressive. Leadership is thrilled.

The codebase tells a different story. It has grown 40 percent in six months. Test coverage has not grown with it. Error handling follows no consistent pattern — some modules retry on failure, some throw unhandled exceptions, some silently swallow errors and return empty results. There is no input validation at the API layer because the frontend validates, except for two endpoints added last month that bypass the frontend entirely. Three separate implementations of the same date-formatting logic sit in three different modules, each subtly different.

Your experienced engineer knows what needs to happen. Refactor the duplicate logic. Add integration tests. Standardize the error-handling pattern. Validate inputs at the API boundary. These are not exotic requirements.

None of them are in the sprint.

Performance tuning is in the backlog, prioritized behind four feature stories. Test coverage is a tech debt ticket rescheduled three times. Error handling standardization was discussed in a retro two months ago and assigned to nobody. Input validation at the API layer is the platform team’s responsibility. The platform team has their own backlog.

But the sprint allocates time for answers, not for questions nobody typed into the ticket. The work that matters is in the backlog, or it’s another team’s problem, or it’s planned for a future sprint that keeps getting pushed. And so the questions go unasked.

Two failures that look like opposite problems. I asked for every feature I could think of, over days, and the system-level design never entered the conversation. Your engineer carries the system-level knowledge but works inside a process that has no structural place for applying it. The knowledge exists. The process doesn’t reach for it.

The output is the same: functional code without design. Software that does what was requested and fails at everything that wasn’t.

In 1986, Fred Brooks published “No Silver Bullet,” an essay that predicted, with uncomfortable precision, the failure mode playing out across the software industry right now.

Brooks argued that software difficulty has two components. Accidental complexity is the difficulty of expressing your design in code: syntax, compilation, boilerplate, deployment. Essential complexity is the difficulty of deciding what the software should do, how it should fail, what it should guarantee, how the parts compose into a whole. Every generation of tooling promises to make software easier by attacking accidental complexity. Structured programming. Object orientation. Fourth-generation languages. Each one made code easier to write. None of them touched the essential complexity — the work of deciding what the system should be.

No tool in the history of software engineering has reduced accidental complexity as dramatically as AI code generation. Code that took days now takes minutes. Brooks would have admired the achievement. He also would have recognized what it doesn’t solve. Deciding that a page should load in under 200 milliseconds is essential complexity. Writing the query that achieves it is accidental. Recognizing that eighty isolated queries will never meet a performance target is essential. Generating each of those eighty queries is accidental.

AI eliminated the accidental complexity so thoroughly that the essential complexity has become invisible. The code compiles, runs, returns the right results. The decisions that would make it perform, scale, and survive are still required. But the step that used to force engineers to confront them, the slow act of writing code by hand, no longer exists.

Researchers at Carnegie Mellon studied 807 open-source repositories that adopted Cursor and compared them against 1,380 matched controls that didn’t. The velocity spike was real: lines of code jumped roughly 3-5x in the first month. By the third month, the speed boost had vanished. What remained was the damage. Static analysis warnings rose approximately 30 percent and stayed elevated. Code complexity climbed 41 percent. The extra complexity then slowed teams down, creating a feedback loop where degraded quality eroded the speed gains that justified adoption in the first place. The paper’s title states the finding plainly: speed at the cost of quality.

GitClear’s 2026 cohort study measured both. Across 2,172 developer-weeks, using data pulled directly from Cursor, GitHub Copilot, and Claude Code APIs, they found that power users produce four to ten times more code than non-users. They also found that code churn among the heaviest AI users was nine times higher. Test coverage went up. So did duplication, short-lived code, and the rate at which newly written lines had to be revised or discarded within weeks. The tools generated more output and more waste simultaneously. My eighty-query page is what that looks like at the level of a single feature. Two independent studies, across thousands of repositories, confirm what it looks like at the level of an industry.

This has happened before.

In the late 1990s, Microsoft Access gave non-technical users the power to build applications. A department coordinator could create a database with forms, queries, and reports. It stored data. It had a UI. It worked. Departments ran on Access databases: tracking inventory, managing schedules, processing invoices, sometimes running payroll.

They all failed along the same fault lines.

No concurrency model. Two users editing the same record at the same time corrupted data in ways that surfaced weeks later. Data integrity constraints were absent. Fields that should have been required weren’t, and relationships between tables were suggestions. When the department outgrew the application, there was no migration path; rebuilding from scratch was the only option. No security architecture. The database file sat on a shared drive, open to anyone with network access.

The tool made creation easy. The design work that mattered (concurrency, integrity, migration, security) stayed invisible until it was too late. The application worked on the day it was built and accumulated structural failures that only became visible when it had to scale, integrate, change, or survive a bad day.

Vibe-coded applications in 2026 break in the same places. The UI is React instead of Access forms. The data layer is PostgreSQL instead of Jet. The stack looks modern. Underneath, the same absence: no concurrency model, no failure mode design, no security architecture, no plan for what happens when the system has to do something its creator didn’t explicitly request.

But the Access era had one limiting factor. The results were obviously not production systems. An .mdb file on a shared drive looked exactly like what it was. When it broke, the damage stayed local. One department, one workflow, one bad Monday.

The AI era has erased that visibility. A vibe-coded application looks identical to a production system. The UI is modern. The stack is contemporary. The deployment pipeline is real. Nothing about the output signals that nobody designed it. The PM’s weekend prototype and the engineer’s production feature come out of the same tool, in the same stack, with the same professional sheen. Neither one looks like an Access database. Both can have the same structural absence underneath.

For twenty years, the slowness of writing code served as an unintentional design review. An engineer writing a database query by hand would notice, on the third query for the same page, that a pattern was emerging. They’d stop. Refactor. Join the queries.

AI removed that pause. The organizational process that relied on it — user stories, acceptance criteria, sprint boundaries — was never designed to carry the design load. The user story says what the user should be able to do. It says nothing about response time under load, behavior during partial failures, or input validation at trust boundaries.

Those properties had a name long before agile, long before AI. Non-functional requirements. The performance, reliability, security, scalability, observability, and maintainability characteristics that determine whether software works or merely runs.

Quality used to cost time, and time was the scarcest resource in software.

AI just eliminated that tradeoff. The tool that generates eighty queries can also generate the integration tests, the input validation, the error handling, the parameterized queries. In the same afternoon. The cost of doing it right just converged with the cost of doing it wrong. Which means every time a team ships without non-functional requirements covered, that’s no longer a resource constraint. It’s a leadership choice. The backlog full of deferred quality work isn’t a prioritization problem anymore. It’s a decision to leave blank a field that would cost almost nothing to fill.

Development cost collapsed. Operational cost didn’t. Compute, database bills, incident response, customer churn from outages, security breach liability — none of those got cheaper. They arguably got more expensive, because near-free development means more software running in more places with less scrutiny. The bill doesn’t come due when you write the code. It comes due when the code has to work.

The governance structures of the pre-AI era — review boards, approval workflows, committees that meet on Tuesdays — were designed to slow teams down. That was the mechanism: add friction, force deliberation. In a world where AI generates production-ready code in minutes, friction-based governance doesn’t scale. It either becomes a bottleneck that teams route around or a rubber stamp that catches nothing. What’s needed is a different mechanism entirely. The replacement for friction-based governance is specification-based governance: defining what the work must satisfy before it ships.

The fix is putting the questions into the artifacts the AI already reads.

Start with the acceptance criteria. “The user can view their dashboard” is a feature requirement. It tells the AI what to build. It says nothing about how the system should behave. Extend it: the dashboard renders in under 200 milliseconds at the 95th percentile. The page makes no more than five database round trips. All data access uses parameterized queries. Errors from upstream services produce a degraded view, not a blank screen. These are not aspirational goals stapled to the end of a ticket. They are testable attributes that the AI can implement directly, if they are in the prompt.

A test that asserts response time under concurrent load asks about performance. One that sends malformed input to the API covers validation. One that simulates an upstream service timeout addresses resilience. If AI makes test-writing cheap, tests become the natural vehicle for encoding what experienced engineers used to carry in their heads. Write the test before the implementation prompt. The test suite becomes the design document: a machine-executable specification the system must satisfy. The implementation can be regenerated. The specification is what survives.

Extend the definition of done. A feature is not complete when it passes its own acceptance criteria. It is complete when the non-functional requirements have been verified for the surface it touches. Response time. Error rates. Resource utilization. Security scan. Input validation coverage. These aren’t separate workstreams owned by separate teams and prioritized in separate backlogs. They are attributes of the feature, as fundamental as returning the right data. The AI can run the performance test, execute the security scan, check the query count. It simply needs the prompt.

This is not new discipline. It is old discipline made newly urgent. Performance, reliability, security, observability, maintainability. Engineers have always known they mattered. The difference is that engineers used to encounter them naturally, in the time it took to write the code. That time is gone. The knowledge remains, but the moment in which it was applied has vanished. These process changes are the explicit replacements for the implicit design review that implementation friction used to provide.

An honest caveat: everything above works for teams that have the knowledge. The experienced engineer who knows what to ask now has a process that asks it. For the non-engineer who builds an application in a weekend, the gap is harder to close. You can hand someone a list of non-functional requirements. The list can name the questions. What it can’t teach is the judgment underneath, the part that knows which tradeoff matters for this system at this scale, that knows when “good enough” is genuinely good enough and when it’s a time bomb. That judgment comes from production scars, not checklists. An organization that defines what done means, with measurable system attributes, will catch the eighty-query page before it ships. An organization that doesn’t will discover it the way department coordinators discovered their Access databases couldn’t handle concurrent users: on the worst possible Monday.

Brooks was right in 1986. The essential complexity of software — deciding what to build, how it should fail, what it must guarantee — has never been solved by a tool and won’t be. What has changed is that the accidental complexity, the code itself, is now so cheap that it no longer serves as a reminder that the essential questions exist. The questions are the same ones they have always been. The process that used to ask them by accident no longer does.

The tools are better now. The absence is the same.

One more absence worth naming: we have SBOMs to declare what’s inside software, and OpenSSF Scorecards to assess security practices. We have nothing that declares what the software was built to do — single-user or multi-tenant, horizontally scalable or not, root or least-privilege. The Software Architecture Manifest (SAM) is a v0 working draft of what that signal could look like: a producer-signed, machine-readable declaration of architectural intent and operational boundaries. SBOM says what’s inside. SLSA says how it was built. SAM says what it was built to do. Disclosure: I built SAM as a potential solution to the problem this article describes. It is open source, open for contribution, and has no commercial interest behind it.

The questions experienced engineers carry in their heads shouldn’t stay there. I’ve published a living reference of the non-functional requirements - for your agent, that separate software that runs from software that works. It’s open for contributions. The tree grows when engineers add the questions their production systems taught them.