Your Tests Work. They're Testing the Wrong Things.

CrowdStrike had the testing infrastructure. They didn't have the failure scenarios to feed it. Neither do you.

On the morning of July 19, 2024, Dr. Rian Kabir walked into his outpatient mental health clinic at the University of Louisville and found every single computer dark. He couldn’t pull up patient records. He couldn’t access his prescription drug monitoring program. He couldn’t submit a prescription to a pharmacy. His team did what medical staff did a century ago: they picked up pens and started writing everything by hand. Across the state, in Paducah, Kentucky, Gary Baulos, 73, had been scheduled for open-heart surgery to clear eight blockages and repair an aneurysm. He was told the operation was canceled. His daughter later said it was scary knowing a loved one had an issue that warranted getting in right away.

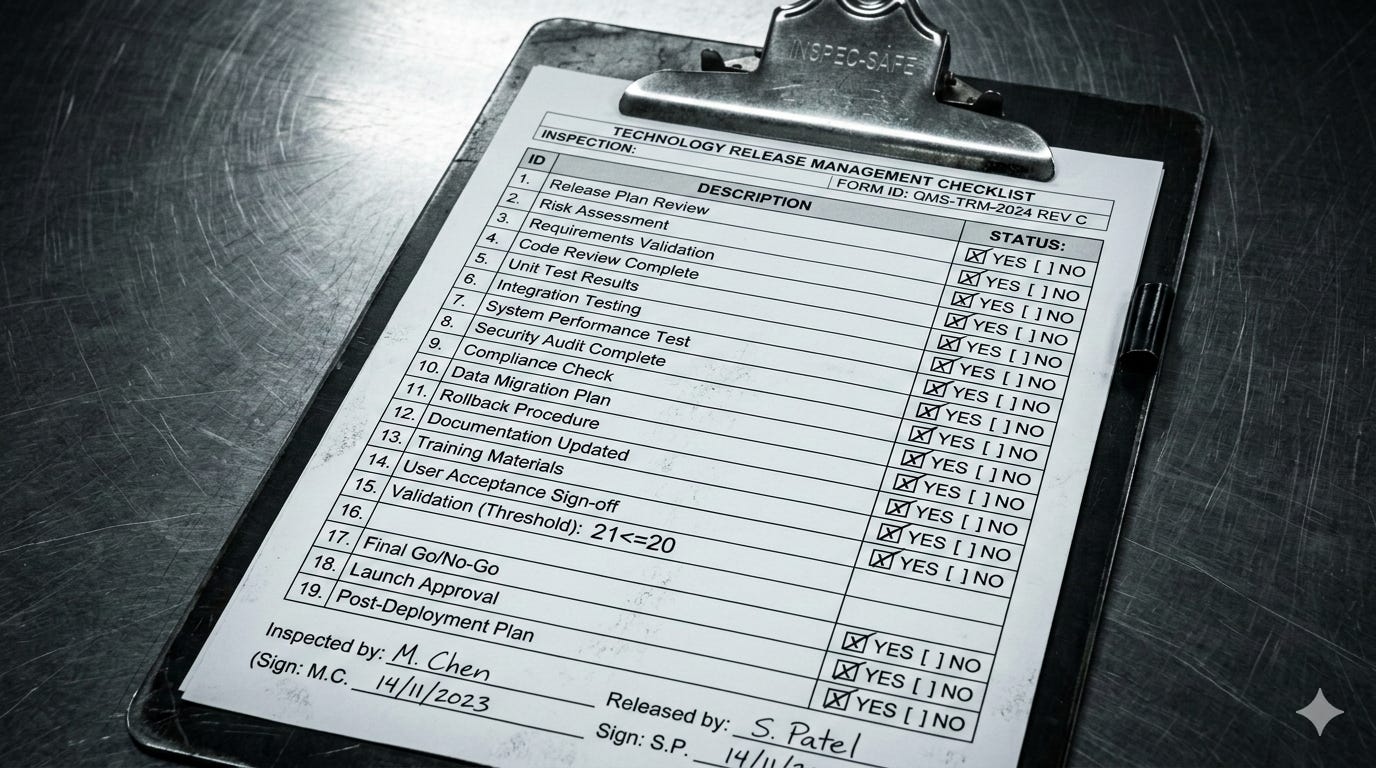

What happened was this: at 04:09 UTC, CrowdStrike, whose Falcon sensor protects nearly 60% of Fortune 500 companies, pushed a routine configuration update to Windows hosts. The update defined 21 input fields. The sensor code supplied only 20. There was no bounds check. The mismatch triggered an out-of-bounds memory read, and 8.5 million machines crashed into a boot loop they could not escape on their own. CrowdStrike identified the error within 79 minutes. It didn’t matter. Every one of those machines had to be fixed by hand: an IT worker booted each device into Safe Mode or the Windows Recovery Environment and deleted a single file. The fix took weeks. The estimated damage exceeded $10 billion.
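A minimal Python sketch makes the mechanism concrete. It assumes a simplified model in which a template is a list of per-field criteria (`None` meaning wildcard) and the sensor supplies a list of input values. The function names are hypothetical, and the real sensor is kernel-mode C/C++, where the unchecked read is undefined behavior rather than a catchable exception.

```python
from typing import Optional, Sequence

# Hypothetical model: one criterion per template field; None is a wildcard.
Criteria = Sequence[Optional[str]]


def evaluate_unchecked(criteria: Criteria, inputs: Sequence[str]) -> bool:
    """Match each non-wildcard criterion against the sensor input at the same index.

    No bounds check: a non-wildcard criterion past the end of `inputs` indexes
    beyond the supplied values. Python raises IndexError; in kernel-mode C the
    same pattern is an out-of-bounds memory read.
    """
    for i, expected in enumerate(criteria):
        if expected is None:
            continue  # wildcard: input i is never read
        if inputs[i] != expected:
            return False
    return True


def evaluate_checked(criteria: Criteria, inputs: Sequence[str]) -> bool:
    """Same matching logic with a runtime bounds check that fails safe."""
    for i, expected in enumerate(criteria):
        if expected is None:
            continue
        if i >= len(inputs):
            return False  # no such input: treat as a non-match instead of crashing
        if inputs[i] != expected:
            return False
    return True


# 21 template fields, 20 sensor inputs, non-wildcard criterion on field 21.
inputs = [f"value{i}" for i in range(20)]
criteria = [None] * 20 + ["value20"]

try:
    evaluate_unchecked(criteria, inputs)
except IndexError as exc:
    print("unchecked evaluation crashed:", exc)

print("checked evaluation matched:", evaluate_checked(criteria, inputs))
```

Both versions accept an all-wildcard template of 21 fields against 20 inputs, because a wildcard never reads its input. That is why tests built only from wildcard instances could not expose the missing check.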

The failure was not exotic. It was a mismatch between two components of CrowdStrike’s own system, one defining 21 fields and the other supplying 20, with no mechanism to verify they agreed. That’s a knowable property of their own architecture, and nothing in their development process was structured to surface it. That gap, the absence of a structured way to generate the failure scenarios that feed the testing and validation systems every team already has, is not unique to CrowdStrike. It is the standard condition of software development everywhere.

The Testing Was There. The Failure Scenarios Weren’t.

The instinctive reaction to the CrowdStrike outage is that they didn’t test enough. In one sense, that reaction is right: the condition that crashed 8.5 million machines was never tested. But the prescription most people draw from it, that CrowdStrike needed more testing, is wrong, and the way it’s wrong is the point.

CrowdStrike had a Content Validator — a dedicated component whose job was to check the integrity of updates before deployment. They had stress tests. They had QA processes. CrowdStrike’s root cause analysis confirms that earlier Channel File 291 updates, deployed between March and April 2024, passed through this infrastructure and “performed as expected in production.” The machinery worked. What failed was what the machinery was given to work with.

The Content Validator checked for structural conformance, but nobody fed it the condition “what if the sensor provides fewer fields than the template defines?” The stress tests used template instances with a wildcard in the 21st field, so the code path that actually read that field never ran; the tests verified a narrower range of conditions than production permitted. And there was no staged deployment (the update went to every host simultaneously) because nobody surfaced blast radius as a scenario the deployment architecture needed to handle.
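One way to generate the missing scenarios is to enumerate the axes a test could vary and diff them against what was actually tested. In this sketch, the two axes (how many inputs the sensor supplies, and whether the last template field is a wildcard) and the `tested` record are illustrative assumptions, not a reconstruction of CrowdStrike’s test suite.

```python
from itertools import product

TEMPLATE_FIELDS = 21  # fields defined by the template type

# Hypothetical scenario axes a team might enumerate in a pre-mortem.
SUPPLIED_COUNTS = [TEMPLATE_FIELDS - 1, TEMPLATE_FIELDS, TEMPLATE_FIELDS + 1]
LAST_FIELD_MODES = ["wildcard", "exact"]


def untested_scenarios(tested: set[tuple[int, str]]) -> list[tuple[int, str]]:
    """Every (inputs supplied, last-field mode) combination the tests never exercised."""
    all_scenarios = set(product(SUPPLIED_COUNTS, LAST_FIELD_MODES))
    return sorted(all_scenarios - tested)


# Illustrative record: tests ran with 20 supplied inputs and a wildcard on field 21.
tested = {(20, "wildcard")}

for supplied, mode in untested_scenarios(tested):
    print(f"untested: sensor supplies {supplied} inputs, field {TEMPLATE_FIELDS} is {mode}")
```

The first untested entry, 20 inputs with an exact match on field 21, is the July 19 condition.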

The testing infrastructure existed. The failure scenarios to feed it did not.

That gap is structural. The standard SDLC has robust mechanisms for verifying that things work: unit tests, integration tests, validators, code review. What it lacks is a structured process for generating the universe of ways things break. Sprint planning asks, “What are we building?” Architecture review asks, “How does this work?” QA asks, “Does this pass the tests we wrote?” Nobody’s job is to ask, “What tests should we have written but didn’t?”

The result is predictable: the tests cover what the developers imagined, which is exactly the optimism-biased subset that humans reliably produce when nobody forces them to think about failure. In 1989, researchers at Wharton, Cornell, and the University of Colorado found that imagining that an event has already occurred, a technique called prospective hindsight, increased people’s ability to identify reasons for future outcomes by 30% in laboratory settings. Decision researcher Gary Klein built on that finding to develop the pre-mortem, a technique for surfacing the failure scenarios that optimism bias otherwise suppresses. Teams don’t lack the knowledge to anticipate failures. They lack a process that asks them to.

Two Design Changes

These aren’t new tools. They’re two structured ways to generate the failure scenarios that feed the testing and validation infrastructure your team already has. Each traces directly to a gap that the CrowdStrike incident made visible.

1. Make pre-mortems a gate — and route their output into your test suite.

In a pre-mortem, the team assumes the project has already failed and works backward to explain why. It works because it flips the demand characteristic: instead of looking like a bad teammate for raising concerns, you’re showing how experienced you are by identifying risks.

Most teams that know about pre-mortems treat them as a facilitation exercise: optional, freestanding, disconnected from the build pipeline. That’s the wrong structural placement. A pre-mortem should be a required gate at two levels. At architecture approval, a pre-mortem on the Falcon sensor’s design would have forced the team to model the compound risk of holding kernel mode, boot-critical status, bypassed external certification, and simultaneous deployment at once. At the deployment pipeline, a pre-mortem on Channel File 291 would have surfaced “what if the content defines more fields than the sensor supplies?”, a scenario that becomes a validator rule. The output of both isn’t a list of worries archived in Confluence. It’s test cases, validator rules, and deployment constraints that flow directly into QA.
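Here is a minimal sketch of such a gate, assuming each pre-mortem scenario is recorded with the ID of the test, validator rule, or deployment constraint that covers it. `PreMortemScenario`, `gate`, and the ID formats are hypothetical; the design choice is that an unlinked scenario fails the gate instead of sitting in a document.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PreMortemScenario:
    scenario_id: str
    description: str
    # ID of the test, validator rule, or deployment constraint that covers it.
    covered_by: Optional[str] = None


def gate(scenarios: list[PreMortemScenario]) -> list[str]:
    """Return IDs of scenarios with no linked check; an empty list means the gate passes."""
    return [s.scenario_id for s in scenarios if not s.covered_by]


scenarios = [
    PreMortemScenario(
        "PM-1",
        "Content defines more fields than the sensor supplies",
        covered_by="validator:field_count_matches_sensor",
    ),
    PreMortemScenario(
        "PM-2",
        "A bad update reaches every host before anyone notices",
        covered_by=None,  # no staged rollout exists yet, so this blocks the gate
    ),
]

blocking = gate(scenarios)
if blocking:
    print("gate failed; unlinked scenarios:", ", ".join(blocking))
```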

2. Make system quality attributes an explicit, prioritized input to architecture — and model the compound risk when multiple constraints stack.

CrowdStrike’s design ambition was a maximally effective security sensor: one that can’t be disabled, loads before threats exist, responds to zero-days in minutes, and protects every host immediately. Pursuing that goal led the design to hold four properties simultaneously. Each one was a choice, not a structural inevitability, and each carried a known risk profile.

The Falcon sensor runs in kernel mode: Ring 0, the same privilege level as the Windows operating system itself. Kernel mode provides maximum visibility into system behavior and tamper resistance against attackers who might disable a user-mode security tool. It also means a crash doesn’t just kill a process. It kills the machine.

The Falcon driver is marked as a boot-start driver — loading before Windows finishes booting, ensuring protection is active before any threat can load. It also means Windows can’t fall back to a “last known good” configuration when the driver crashes, because the system considers it essential for boot.

The Rapid Response Content mechanism sits entirely outside Microsoft’s external WHQL certification process. The driver itself is certified, but the channel files it processes at runtime are not: binary code, as Microsoft VP David Weston put it, that “traversed Microsoft” without Microsoft ever seeing it, validated solely through CrowdStrike’s internal Content Validator. The benefit: threat response in minutes instead of weeks of recertification. The cost: no external safety check on content running in the most privileged execution environment on the machine.

And updates deploy to every Windows host simultaneously — no canary rollout, no staged deployment — ensuring universal coverage the moment a threat definition ships. It also means the blast radius of a bad update is every machine, everywhere, at once.
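Staged deployment is the recovery mechanism this property gives up. As a sketch, a rollout can advance ring by ring and halt when a ring’s crash rate crosses a threshold. The ring sizes, the 1% threshold, and the `deploy_to_ring` callback are hypothetical placeholders, not any vendor’s deployment API.

```python
from typing import Callable, Sequence


def staged_rollout(
    rings: Sequence[int],
    deploy_to_ring: Callable[[int], float],
    max_crash_rate: float = 0.01,
) -> int:
    """Deploy ring by ring, stopping at the first ring whose crash rate exceeds the threshold.

    rings: host count per ring, smallest (canary) ring first.
    deploy_to_ring: hypothetical callback that deploys to ring i and returns
        the crash rate observed there, as a fraction between 0 and 1.
    Returns the number of hosts that received the update.
    """
    exposed = 0
    for i, size in enumerate(rings):
        exposed += size
        crash_rate = deploy_to_ring(i)
        if crash_rate > max_crash_rate:
            print(f"halting after ring {i}: crash rate {crash_rate:.0%}")
            return exposed
    return exposed


# Illustrative ring sizes; the update crashes every host it reaches.
rings = [1_000, 100_000, 8_400_000]
exposed = staged_rollout(rings, deploy_to_ring=lambda i: 1.0)
print(f"hosts exposed: {exposed:,} of {sum(rings):,}")
```

With a canary ring of 1,000 hosts, an update that crashes everything it touches stops at 1,000 machines instead of millions.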

None of these properties are unreasonable in isolation. Each exists across the industry. Each has a known risk profile that any architecture review would recognize.

But CrowdStrike’s design holds all four simultaneously, and the risk surface of holding all four isn’t additive; it’s multiplicative. A kernel-mode crash is recoverable if the driver isn’t boot-critical. A boot-critical driver is far safer if its content goes through external certification. Internally validated content is recoverable if deployment is staged. When you hold all four, you’ve removed every recovery mechanism. A single bad content update, validated only internally, running in kernel mode, on a boot-critical driver, deployed to every host at once: that’s the architecture that produced July 19.

With every constraint you add to a design, you become more accountable for testing the failure modes that constraint creates. CrowdStrike added four constraints that each independently increased risk, and didn’t raise the testing bar for any of them. The Content Validator, the sole remaining safety mechanism, was never given the failure scenarios that the compound architecture demanded.

System quality attributes — the “-ilities” like reliability, recoverability, fault tolerance, deployability, testability — are a formal architectural taxonomy that gives teams a structured vocabulary for this conversation. The design change: require explicit prioritization of quality attributes during architecture or design review. Which qualities are you designing for? Which are you accepting risk on? When multiple risk-bearing properties stack, map every constraint. For each one, name what recovery mechanism it removes. When the map shows no recovery path remaining, that’s where your testing investment must be highest — because the architecture has left no room for error.
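The constraint map can start as a plain lookup from each risk-bearing property to the recovery mechanism it removes. This sketch encodes the four Falcon properties with paraphrased, illustrative labels; it is one possible representation, not a formal method from the quality-attributes literature.

```python
# Hypothetical constraint map: each risk-bearing property of the design,
# paired with the recovery mechanism that holding it removes.
CONSTRAINTS = {
    "kernel mode": "crash confined to one process",
    "boot-start driver": "machine boots without the faulty driver",
    "uncertified content": "external review catches bad content",
    "simultaneous deployment": "staged rollout limits blast radius",
}

# Recovery mechanisms the design would otherwise have available.
ALL_RECOVERY = set(CONSTRAINTS.values())


def remaining_recovery(held: set[str]) -> set[str]:
    """Recovery mechanisms that survive when the given properties are all held."""
    removed = {CONSTRAINTS[prop] for prop in held}
    return ALL_RECOVERY - removed


for held in ({"kernel mode"}, {"kernel mode", "boot-start driver"}, set(CONSTRAINTS)):
    left = remaining_recovery(held)
    status = ", ".join(sorted(left)) if left else "none (highest testing priority)"
    print(f"{len(held)} held -> remaining recovery: {status}")
```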

And for each constraint, ask one more question: what detection do we currently have for the failure modes it creates? If the answer is none, you’ve found your next test. CrowdStrike’s Content Validator never checked the template’s field count against the sensor’s input contract — a producer and consumer disagreeing on a schema, which is a class of error with well-understood solutions. Serialization formats like Protocol Buffers and Avro handle this explicitly. The practice of verifying that two components agree on their interface — contract testing — is mature enough that the absence of any such mechanism in CrowdStrike’s content pipeline is itself a design failure the compound risk analysis would have surfaced.
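A contract test for this class of error can be small. The sketch assumes, hypothetically, that the sensor (the consumer) publishes how many input values it supplies per template type, and that every template instance is checked against that contract before release. `SENSOR_CONTRACT`, `TemplateInstance`, and `check_instance` are invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical contract published by the sensor (consumer):
# template type -> number of input values the sensor actually supplies.
SENSOR_CONTRACT = {"ipc_template": 20}


@dataclass
class TemplateInstance:
    template_type: str
    criteria: list[Optional[str]]  # one criterion per field; None means wildcard


def check_instance(instance: TemplateInstance) -> list[str]:
    """Return contract violations; an empty list means the instance may ship."""
    supplied = SENSOR_CONTRACT.get(instance.template_type)
    if supplied is None:
        return [f"unknown template type {instance.template_type!r}"]
    problems = []
    if len(instance.criteria) != supplied:
        problems.append(
            f"instance defines {len(instance.criteria)} fields, sensor supplies {supplied}"
        )
    # Any non-wildcard criterion beyond the supplied inputs would read past the end.
    for i, criterion in enumerate(instance.criteria[supplied:], start=supplied):
        if criterion is not None:
            problems.append(f"field {i + 1} has a non-wildcard criterion but no sensor input")
    return problems


bad = TemplateInstance("ipc_template", [None] * 20 + ["pattern"])
for problem in check_instance(bad):
    print("reject:", problem)
```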

The pre-mortem surfaces what the team can imagine. The quality attributes taxonomy surfaces the compound risk that no individual would model alone. Together, they produce the failure scenarios your testing infrastructure is waiting for.

The Honest Tradeoff

These changes add time. Pre-mortem gates add a meeting. Quality attribute prioritization adds a planning step. A team running both on every significant design will ship slower in the short term.

The honest argument isn’t that prevention is free. It’s that the cost of the failures teams are currently shipping — the $10 billion outage, the canceled surgeries, the psychiatrist writing prescriptions by hand — exceeds the cost of generating the failure scenarios that would have fed the testing systems that already existed. The QA infrastructure was there. The inputs weren’t. These two changes produce the inputs.

The natural home for this work is the architecture or design review, the point where structural decisions are made and where compound risk becomes visible. Someone in that room needs to own the question: what failure scenarios does this design demand that we haven’t generated yet? That won’t prevent every outage. But on July 19, 2024, the testing machinery was ready. Nobody’s job required them to feed it the scenario that mattered: what happens when a template defines 21 fields and a sensor sends 20.